What's New In Speak - April 2024

Interested in What's New In Speak February 2024? Check out this post for all the new updates available for you in Speak today!

Large language models are powerful tools used by researchers, companies, and organizations to process and analyze large volumes of text. These models are capable of understanding natural language and can be used to identify meanings, relationships, and patterns in text-based data.

They are used in areas such as natural language processing (NLP), sentiment analysis, text classification, text generation, image generation, video generation, question-answering and more. In this short piece, we will explore what large language models are, how they work, and their applications.

Large language models are models that use deep learning algorithms to process large amounts of text. They are designed to understand the structure of natural language and to pick out meanings and relationships between words. These models are capable of understanding context, identifying and extracting information from text, and making predictions about a text’s content.

They are trained on large datasets, such as the Common Crawl corpus and Wikipedia, to learn the structure and nuances of natural language. This allows them to generate new text in a similar style to the training data. They are also used to identify patterns in text and to classify documents into different categories.

There are still limited guides and resources on how large language models work. Here is a video on "Large Language Models From Scratch" by Graphics in 5 Minutes.

Large language models are based on neural networks, which are networks of artificial neurons connected together in layers. Each neuron can receive inputs from other neurons and produce an output. The output of each neuron is determined by its weights, which are adjusted as the model is trained.

Large language models are composed of several layers of neurons. The first layer takes in a sequence of words as input (split into tokens and converted to numbers), and each subsequent layer processes the output of the previous layer. The output of the last layer is the model’s prediction, typically a probability for each candidate next word given the input.
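To make the idea of layered neurons concrete, here is a toy sketch in plain Python. It is not how any production model is built: real large language models use far larger transformer layers, and the weights, layer sizes, `tanh` activation, and softmax output below are all assumptions chosen only for illustration.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of its inputs, then a tanh activation."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return math.tanh(total)

def layer(inputs, weight_rows, biases):
    """A layer is a list of neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def forward(inputs, layers):
    """Feed the inputs through each layer in order, then normalize the result."""
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer(activations, weight_rows, biases)
    return softmax(activations)

# Hypothetical toy network: 2 inputs -> 3 hidden neurons -> 2 outputs.
layers = [
    ([[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]], [0.0, 0.1, -0.1]),
    ([[0.2, -0.5, 0.7], [0.6, 0.1, -0.4]], [0.0, 0.0]),
]
probs = forward([1.0, 0.5], layers)
print(probs)
```

Each layer only sees the output of the layer before it, which is the same flow described above, just at a tiny scale.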

The model is trained using a large dataset of text. During training, the model adjusts the weights of its neurons to better identify the relationships between words. This allows it to better understand the context of the text and make more accurate predictions.
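As a rough illustration of what "adjusting the weights" means, the sketch below trains a single neuron with gradient descent on made-up numeric data. The learning rate, the number of passes, and the data itself are assumptions for the example; real language models apply the same basic idea to billions of weights and to text rather than to three number pairs.

```python
def train_neuron(examples, learning_rate=0.1, epochs=200):
    """Fit prediction = weight * x + bias by nudging the weights after each example."""
    weight, bias = 0.0, 0.0
    for _ in range(epochs):
        for x, target in examples:
            prediction = weight * x + bias
            error = prediction - target
            # Gradient of 0.5 * error**2 is error * x for the weight and error for the bias.
            weight -= learning_rate * error * x
            bias -= learning_rate * error
    return weight, bias

# Made-up training data that follows target = 2 * x + 1.
examples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
weight, bias = train_neuron(examples)
print(round(weight, 3), round(bias, 3))
```

After enough passes over the data, the weight and bias settle close to the values that generated the examples, which is the small-scale version of a model "learning" from its training set.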

Large language models are used in a wide variety of applications. They are used to generate natural-sounding text, such as in chatbots and virtual assistants.

We've seen an explosion of text generation features built on large language models from companies like OpenAI, Jasper, and Copy.ai.

We've also seen a rapid increase in text-to-image generation applications from companies like Stability AI, Midjourney, OpenAI and more.

Large language models are also used to identify the sentiment of text, such as in sentiment analysis. They can be used to classify documents into categories, such as in text classification tasks. They are also used in question-answering systems, such as in customer service applications.

Large language models are powerful tools used to process and analyze large amounts of text. They are based on deep learning algorithms and are trained on large datasets to learn the structure of natural language.

If you are interested, you can also check out some of the best large language models available today.

To start your transcription and analysis, you first need to create a Speak account. No worries, this is super easy to do!

Get a 7-day trial with 30 minutes of free English audio and video transcription included when you sign up for Speak.

To sign up for Speak and start using Speak Magic Prompts, visit the Speak app register page here.

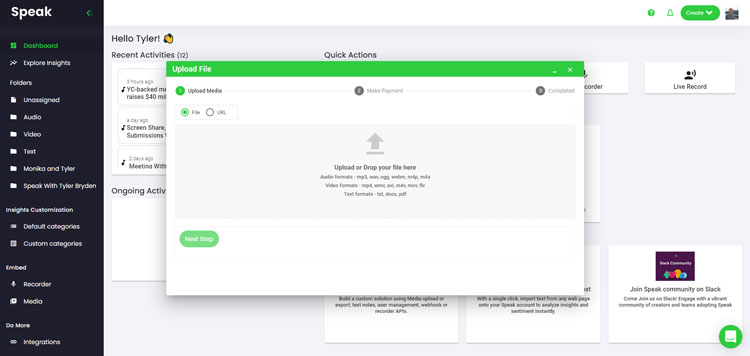

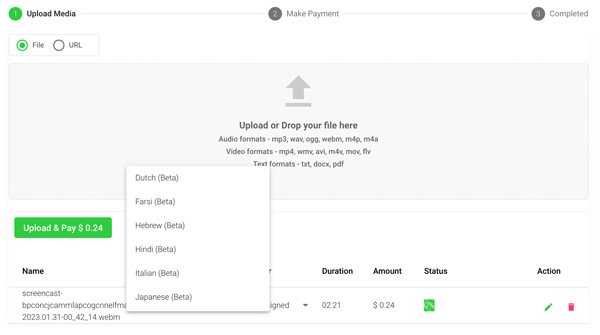

We typically recommend MP4s for video or MP3s for audio.

However, we accept a range of audio, video and text file types.

You can upload your file for transcription in several ways using Speak:

You can also upload CSVs of text files or audio and video files. You can learn more about CSV uploads and download Speak-compatible CSVs here.

With the CSVs, you can upload anything from dozens of YouTube videos to thousands of interview records.

You can also upload media to Speak through a publicly available URL.

As long as the URL ends with the file type extension (for example, .mp4 or .mp3), you will have no problem importing your recording for automatic transcription and analysis.
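If you want to check a batch of links before importing them, a small script like the one below can flag URLs that do not end in a media file extension. This is only a sketch: the extension list is an assumption (Speak's full list of accepted file types is not reproduced here), and `has_media_extension` is a hypothetical helper, not part of any Speak tool.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse

# Assumed list for illustration only; check Speak's supported file types for the real one.
ASSUMED_EXTENSIONS = {".mp3", ".mp4", ".wav", ".m4a", ".mov"}

def has_media_extension(url, allowed=ASSUMED_EXTENSIONS):
    """Return True if the path part of the URL ends with an allowed file extension."""
    path = urlparse(url).path
    return PurePosixPath(path).suffix.lower() in allowed

print(has_media_extension("https://example.com/files/interview.MP3"))
print(has_media_extension("https://example.com/watch?v=abc"))
```

The check looks only at the path, so a link with a query string after the file name still passes here. Whether such links import cleanly is not something this sketch can confirm.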

Speak is compatible with YouTube videos. All you have to do is copy the URL of the YouTube video (for example, https://www.youtube.com/watch?v=qKfcLcHeivc).

Speak will automatically find the file, calculate the length, and import the video.

If using YouTube videos, please make sure you use the full link and not the shortened YouTube snippet. Additionally, make sure you remove the channel name from the URL.
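If you are preparing many YouTube links at once, the cleanup above can be scripted. The sketch below assumes that the "shortened" form means `youtu.be` links and that the channel name appears as the `ab_channel` query parameter; both are assumptions, and `full_youtube_url` is a hypothetical helper name.

```python
from urllib.parse import parse_qs, urlparse

def full_youtube_url(url):
    """Rebuild a YouTube link as a full watch URL that keeps only the video ID."""
    parsed = urlparse(url)
    host = parsed.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    if host == "youtu.be":
        # Shortened links carry the video ID in the path.
        video_id = parsed.path.lstrip("/")
    elif host in ("youtube.com", "m.youtube.com"):
        # Full links carry the video ID in the "v" query parameter.
        video_id = parse_qs(parsed.query).get("v", [""])[0]
    else:
        raise ValueError(f"Not a YouTube URL: {url}")
    if not video_id:
        raise ValueError(f"No video ID found in: {url}")
    # Rebuilding the URL drops every other parameter, including ab_channel.
    return f"https://www.youtube.com/watch?v={video_id}"

print(full_youtube_url("https://youtu.be/qKfcLcHeivc"))
print(full_youtube_url("https://www.youtube.com/watch?v=qKfcLcHeivc&ab_channel=SomeChannel"))
```

Both calls print the same full link, which is the form recommended above.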

As mentioned, Speak also contains a range of integrations for Zoom, Zapier, Vimeo and more that will help you automatically transcribe your media.

This library of integrations continues to grow! Have a request? Feel encouraged to send us a message.

Once your file(s) are ready and loaded into Speak, it will automatically calculate the total cost (you get 30 minutes of audio and video free in the 7-day trial, so take advantage of it!).

If you are uploading text data into Speak, you do not currently have to pay any cost. Only Speak Magic Prompts analysis incurs a fee, which is detailed below.
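If you would like to estimate ahead of time how much of an upload falls outside the trial, the arithmetic is simple. The sketch below only counts minutes beyond the 30 free trial minutes; it does not compute a price, and the file lengths are made-up examples. `billable_minutes` is a hypothetical helper, not something Speak provides.

```python
def billable_minutes(file_minutes, free_minutes=30.0):
    """Total minutes across all files, minus the free trial allowance (never below zero)."""
    total = sum(file_minutes)
    return max(0.0, total - free_minutes)

# Three example recordings: 12.5, 20 and 4 minutes long.
print(billable_minutes([12.5, 20.0, 4.0]))
```

Speak performs this calculation for you at upload time, so treat the number here as a planning estimate only.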

Once you go over your 30 minutes or need to use Speak Magic Prompts, you can pay by subscribing to a personalized plan using our real-time calculator.

You can also add a balance or pay for uploads and analysis without a plan using your credit card.

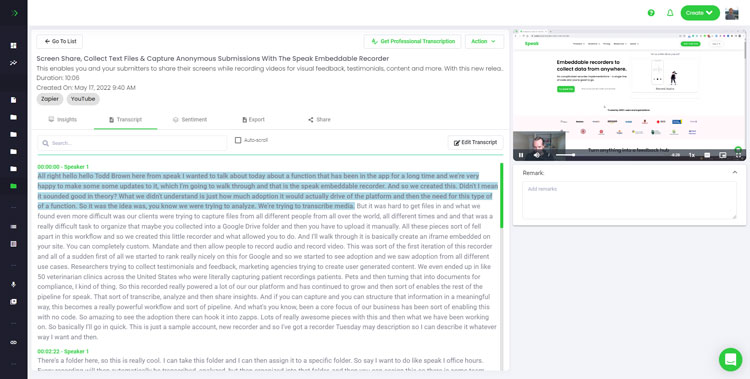

If you are uploading audio and video, our automated transcription software will prepare your transcript quickly. Once completed, you will get an email notification that your transcript is complete. That email will contain a link back to the file so you can access the interactive media player with the transcript, analysis, and export formats ready for you.

If you are importing CSVs or uploading text files, Speak will generally analyze the information much more quickly.

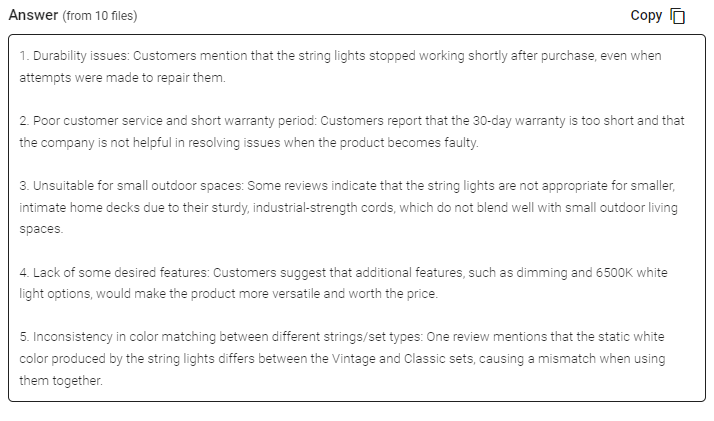

Speak is capable of analyzing both individual files and entire folders of data.

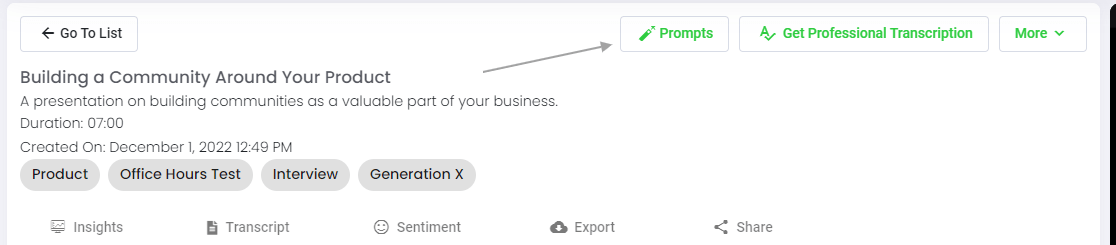

When you are viewing any individual file in Speak, all you have to do is click on the "Prompts" button.

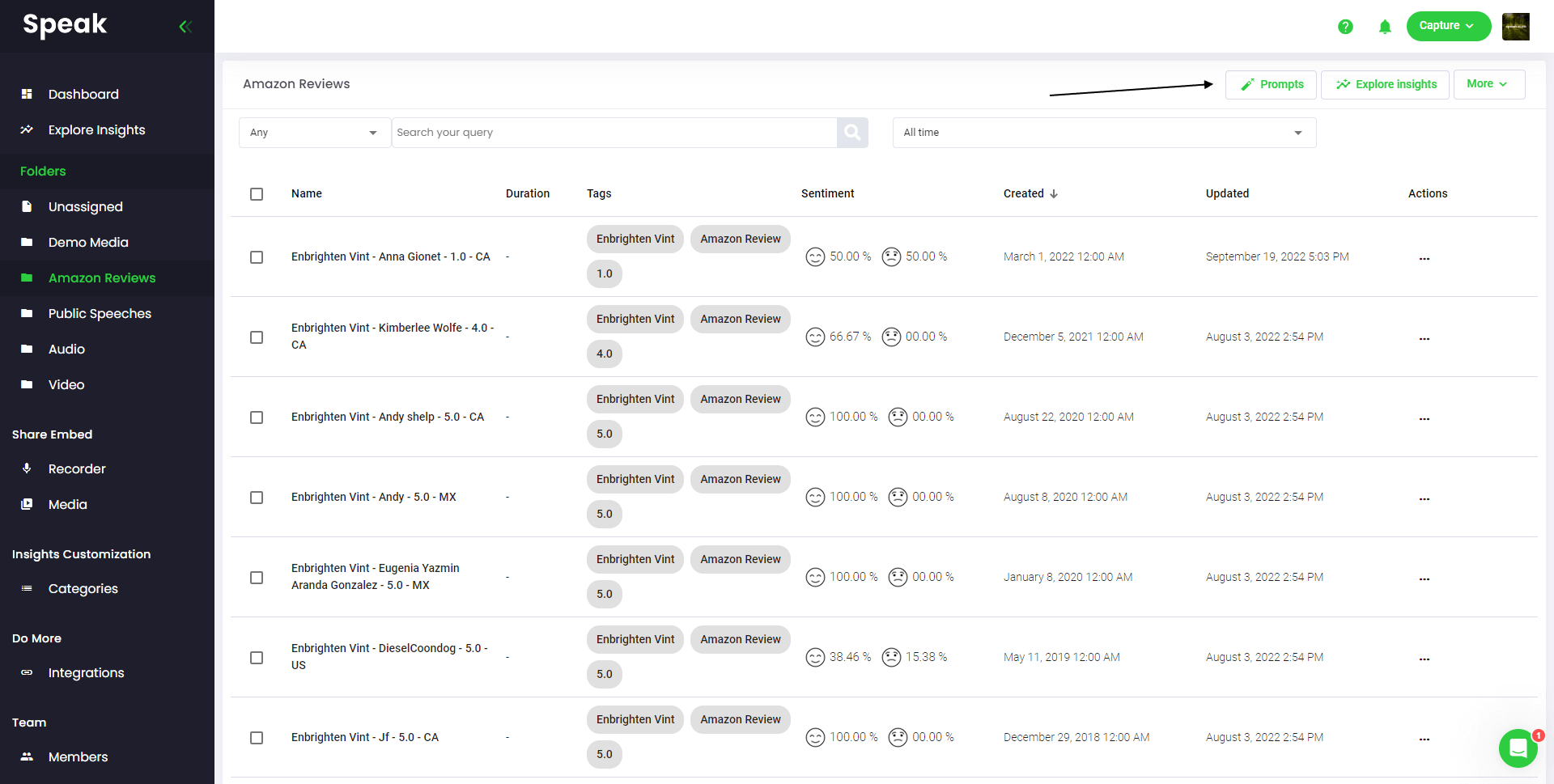

If you want to analyze many files, all you have to do is add the files you want to analyze into a folder within Speak.

You can do that by adding new files into Speak or you can organize your current files into your desired folder with the software's easy editing functionality.

Speak Magic Prompts leverage innovation in artificial intelligence models often referred to as "generative AI".

These models have analyzed huge amounts of data from across the internet to gain an understanding of language.

With that understanding, these "large language models" are capable of performing mind-bending tasks!

With Speak Magic Prompts, you can now perform those tasks on the audio, video and text data in your Speak account.

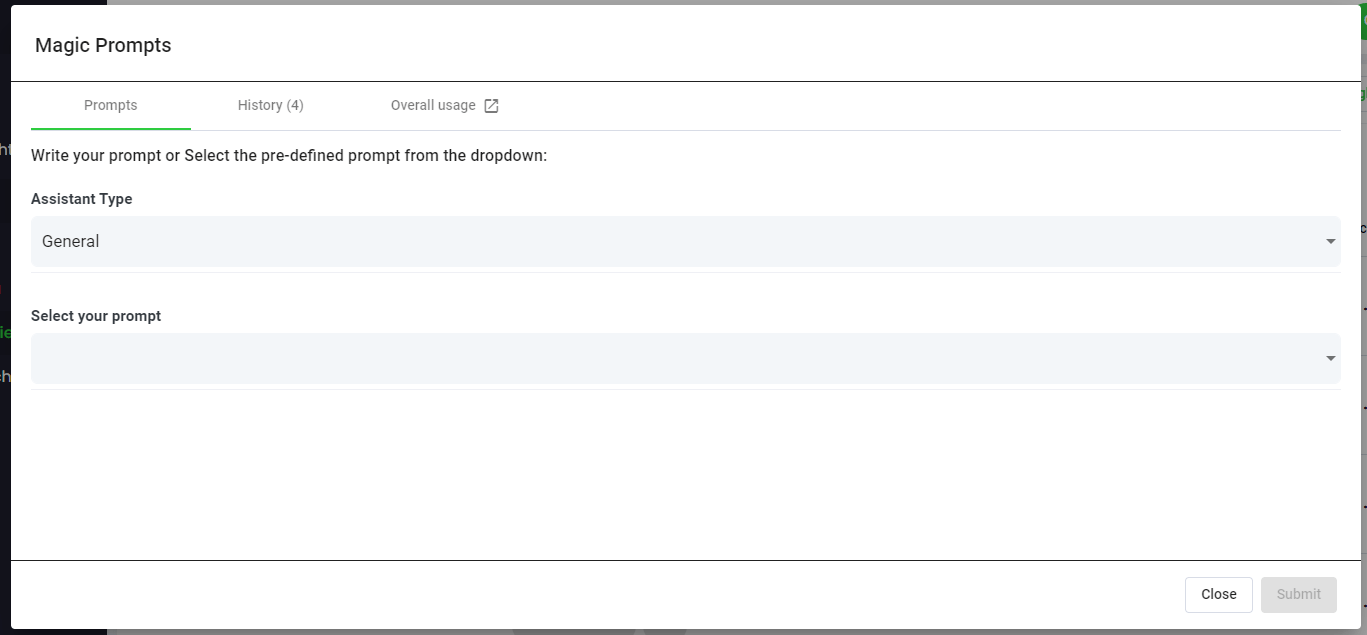

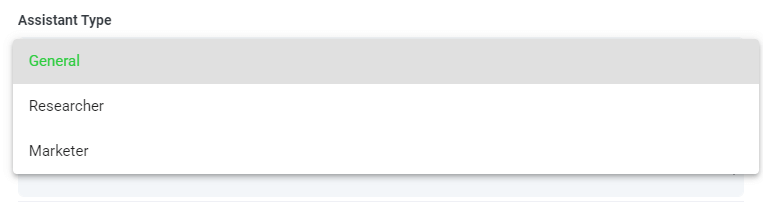

To help you get better results from Speak Magic Prompts, Speak has introduced "Assistant Type".

These assistant types pre-set and provide context to the prompt engine for more concise, meaningful outputs based on your needs.

To begin, we have included:

Choose the most relevant assistant type from the dropdown.

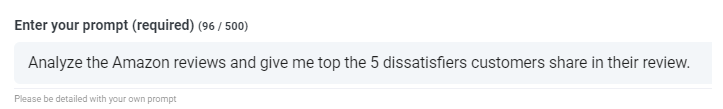

Here are some example prompts that you can apply to any file right now:

A modal will pop up so you can use the suggested prompts we shared above to instantly and magically get your answers.

If you have your own prompts you want to create, select "Custom Prompt" from the dropdown and another text box will open where you can ask anything you want of your data!

Speak will generate a concise response for you in a text box below the prompt selection dropdown.

In this example, we ask Speak to analyze all the interview data in the folder at once for the top product dissatisfiers.

You can easily copy that response for your presentations, content, emails, team members and more!

Our team at Speak Ai continues to optimize the pricing for Magic Prompts and Speak as a whole.

Right now, anyone in the 7-day trial of Speak gets 100,000 characters included in their account.
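To get a feel for how far 100,000 characters goes, you can count the characters in the text you plan to analyze. The sketch below is a rough planning aid under one big assumption: that usage is measured as the plain character count of the analyzed text. How Speak actually meters characters (for example, whether prompts or responses count) is not covered here, and `remaining_characters` is a hypothetical helper.

```python
TRIAL_CHARACTERS = 100_000  # trial allowance stated above

def remaining_characters(texts, allowance=TRIAL_CHARACTERS):
    """Allowance minus the combined length of the texts; negative means over budget."""
    used = sum(len(text) for text in texts)
    return allowance - used

# Two stand-in transcripts of 40,000 and 25,000 characters.
transcripts = ["a" * 40_000, "b" * 25_000]
print(remaining_characters(transcripts))
```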

If you need more characters, you can easily include Speak Magic Prompts in your plan when you create a subscription.

You can also upgrade the number of characters in your account if you already have a subscription.

Both options are available on the subscription page.

Alternatively, you can use Speak Magic Prompts by adding a balance to your account. The balance will be used as you analyze characters.

Here at Speak, we've made it incredibly easy to personalize your subscription.

Once you sign up, just visit our custom plan builder and select the media volume, team size, and features you want to get a plan that fits your needs.

No more rigid plans. Upgrade, downgrade or cancel at any time.

When you subscribe, you will also get a free premium add-on for three months!

That means you save up to $50 USD per month and $150 USD in total.

Once you subscribe to a plan, all you have to do is send us a live chat with your selected premium add-on from the list below:

We will put the add-on live in your account free of charge!

What are you waiting for?

If you have friends, peers and followers interested in using our platform, you can earn real monthly money.

You will get paid a percentage of all sales, whether the customers you refer pay for a plan, automatically transcribe media or leverage professional transcription services.

Use this link to become an official Speak affiliate.

It would be an honour to personally jump on an introductory call with you to make sure you are set up for success.

Just use our Calendly link to find a time that works well for you. We look forward to meeting you!

Get a 7-day fully-featured trial.



Powered by Speak Ai Inc. Made in Canada with

Use Speak's powerful AI to transcribe, analyze, automate and produce incredible insights for you and your team.