What's New In Speak - April 2024

Interested in What's New In Speak February 2024? Check out this post for all the new updates available for you in Speak today!

When you're analyzing qualitative feedback and using it to improve your product, it's important to understand that there are many different types of qualitative data. Each type can be useful in its own way, so it helps to know how to analyze and interpret the information from each one.

Qualitative feedback is often misunderstood and misused despite its relevance.

An organization's ability to collect meaningful and trustworthy quantitative data must be weighed against the value of actively listening to the people it serves. To learn how to effectively assist clients, employees, and other important stakeholders, qualitative comments are crucial. Well-executed qualitative evaluations can yield invaluable insights that inform programmatic enhancements.

However, collecting qualitative data and making sense of it can be challenging.

That is why we'll show you how to choose and analyze qualitative feedback. Read this article in its entirety, and you will leave with a firm grasp of how to use qualitative feedback in your company strategy.

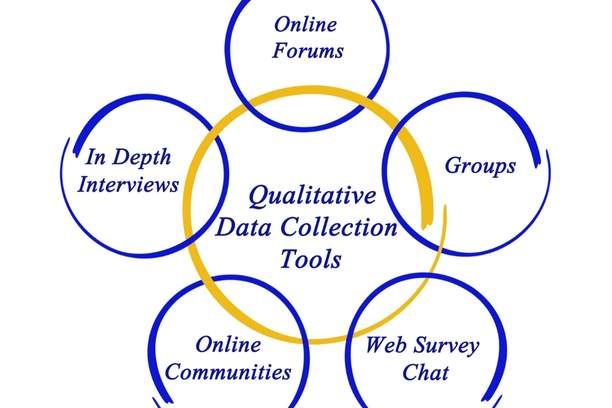

The term "qualitative feedback" is used to describe responses that cannot be reduced to a measurable set of numbers. It may be used by businesses to get insights into customers' perspectives, preferences, and experiences. It can be gathered by employing in-depth discussions, focus groups, and free-form surveys.

A good way to understand qualitative feedback is to think of it as a way to learn more about your users. It can serve many purposes, but the basic idea is that you study what people tell you to gain insight into what they are thinking and why they do what they do.

Qualitative feedback methods are often used in conjunction with quantitative data. They provide more context about what's happening in user behavior, which can help you make better decisions about product development or design. For example, a survey on your website asking users whether they like a new feature won't give you the full picture of how they feel about it, because there might be many reasons why someone would say no. Maybe it took too long to load, or maybe they didn't understand how to use it correctly. With qualitative feedback methods, you can dig deeper into these questions and find out exactly why people don't like something so you can improve it!

Qualitative feedback describes what people see, hear, and experience: the qualities of something. It differs from quantitative feedback, which involves numbers, statistics, and measurable data.

In contrast to quantitative data, qualitative information provides an in-depth understanding. You can use it to enhance a company's offerings in terms of both products and services. Researchers may discover customer insights in any number of places, including the internet or a company's internal systems, in the form of free-form survey replies, emails, and social media comments. Qualitative responses often center on the respondent's thoughts, feelings, and perceptions.

Here are some examples of qualitative feedback:

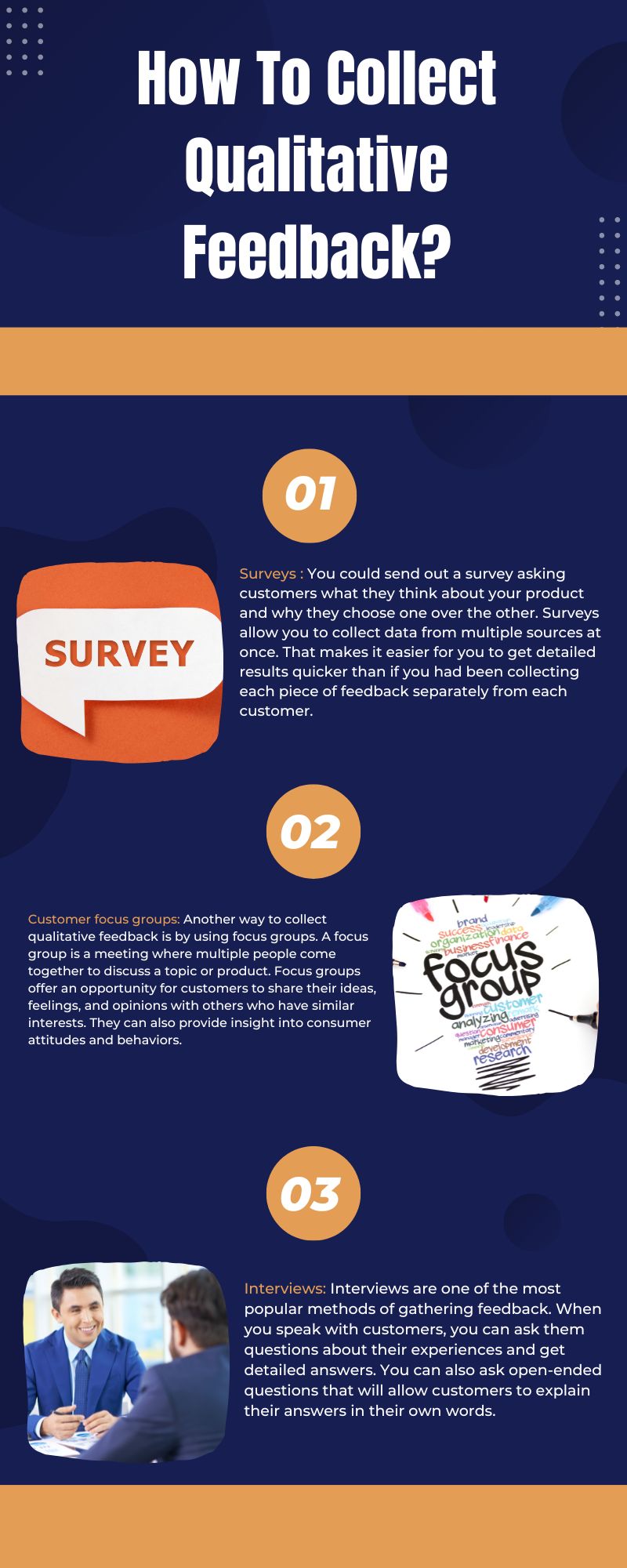

There are several ways to collect qualitative feedback. Here are a few examples:

You could send out a survey asking customers what they think about your product and why they chose it over the alternatives. Surveys allow you to collect data from many respondents at once, which makes it easier to get detailed results more quickly than if you collected each piece of feedback separately from each customer.

Another way to collect qualitative feedback is by using focus groups. A focus group is a meeting where multiple people come together to discuss a topic or product. Focus groups offer an opportunity for customers to share their ideas, feelings, and opinions with others who have similar interests. They can also provide insight into consumer attitudes and behaviors.

Bring together several customers and ask them questions about their experiences with your product or service. The facilitator leads the group toward a productive discussion and can ask questions that encourage participants to share their thoughts and feelings openly with each other.

Interviews are one of the most popular methods of gathering feedback. When you speak with customers, you can ask them questions about their experiences and get detailed answers. You can also ask open-ended questions that will allow customers to explain their answers in their own words.

Qualitative feedback is a powerful way to improve your product or service. It can help you better understand the needs of your customers and identify opportunities for improvement. It also helps to make more informed decisions about what features to build next.

Qualitative feedback often overlaps with usability testing, customer feedback, and user testing. The idea is that you get real people to give their honest opinions about something, whether it's a website, app, or physical product. You can then use this information to improve your product.

Qualitative feedback can identify key problems with your product or service that do not show up in quantitative data because they're too small or too common to be noticed statistically.

Qualitative feedback is more detailed than quantitative feedback because it allows respondents to explain exactly what they liked or disliked about something. This makes it more helpful for teams that want to improve their services or businesses.

Qualitative feedback is great for:

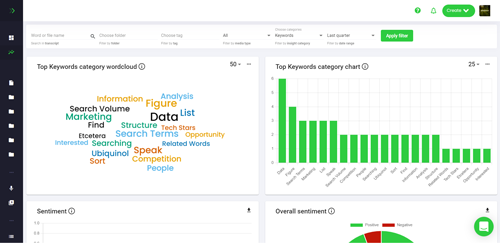

When you're trying to understand the qualitative feedback of your customers, you can use a data analysis tool like Speak Ai.

Speak Ai is a software tool that uses artificial intelligence to analyze audio recordings and provide feedback in real-time.

Speak Ai allows you to analyze your data and provides insights into what your customers are saying about you, your brand, and your products, and how they feel about their experiences with your company. It also allows you to compare feedback from different sources (e.g., social media vs. email) so that you can see which channels are most effective at reaching your audience and garnering positive responses from them.

Now you can join the 7,000+ organizations and people throughout the globe that use Speak Ai as their go-to tool for qualitative feedback analysis. Grab this opportunity to enhance your workflow with a trial or demo today.

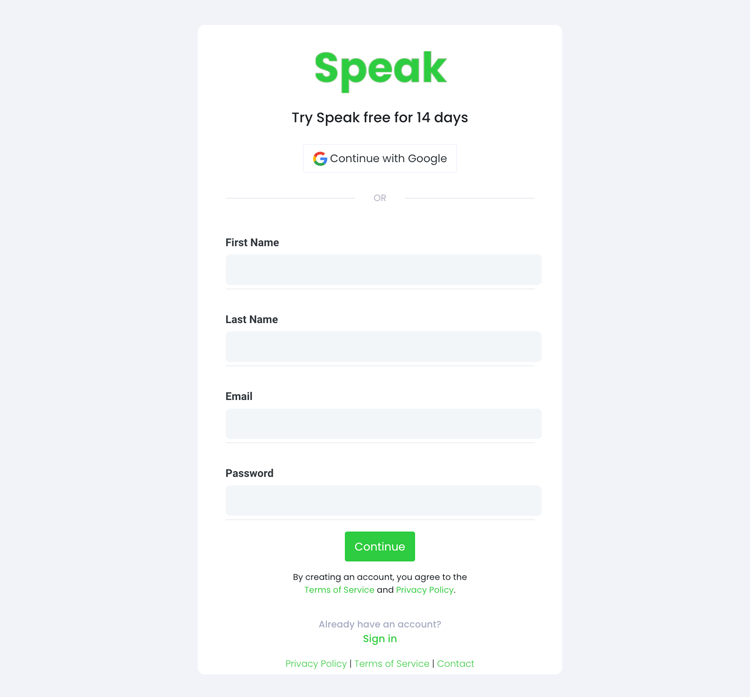

To start your transcription and analysis, you first need to create a Speak account. No worries, this is super easy to do!

Get a 7-day trial with 30 minutes of free English audio and video transcription included when you sign up for Speak.

To sign up for Speak and start using Speak Magic Prompts, visit the Speak app register page here.

We typically recommend MP4s for video or MP3s for audio.

However, we accept a range of audio, video and text file types.

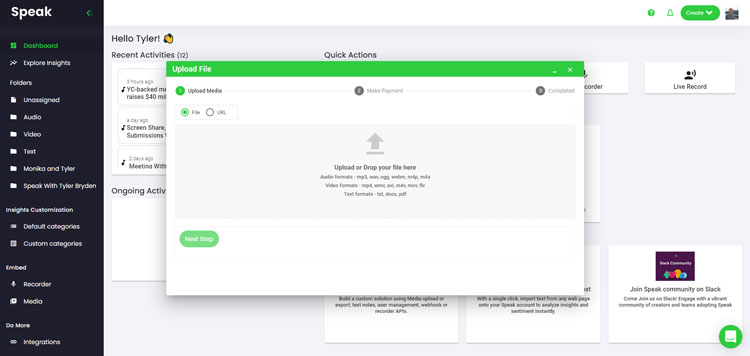

You can upload your file for transcription in several ways using Speak:

You can also upload CSVs of text files or audio and video files. You can learn more about CSV uploads and download Speak-compatible CSVs here.

With the CSVs, you can upload anything from dozens of YouTube videos to thousands of interview records.

You can also upload media to Speak through a publicly available URL.

As long as the file type extension appears at the end of the URL, you will have no problem importing your recording for automatic transcription and analysis.
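Before importing by URL, it can help to check that the link really ends in a media file extension. The snippet below is a minimal sketch of such a check, not part of Speak itself. The function name `has_media_extension` and the allow-list are assumptions for illustration; the list contains only the two formats this article names explicitly (MP4 and MP3). The sketch also assumes that a trailing query string after the extension is acceptable, which may not match how Speak treats such links.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse

# Assumed allow-list: only the two formats the article recommends by name.
ASSUMED_EXTENSIONS = {".mp4", ".mp3"}


def has_media_extension(url: str, allowed=ASSUMED_EXTENSIONS) -> bool:
    """Return True if the URL's path ends in one of the allowed extensions."""
    # urlparse separates the path from any query string or fragment,
    # so "file.mp3?token=abc" is still recognized as an MP3 link.
    path = urlparse(url).path
    suffix = PurePosixPath(path).suffix.lower()
    return suffix in allowed


print(has_media_extension("https://example.com/media/interview-01.mp3"))  # True
print(has_media_extension("https://example.com/watch?id=123"))            # False
```

If a link fails this check, downloading the file and uploading it directly is a reasonable fallback.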

Speak is compatible with YouTube videos. All you have to do is copy the URL of the YouTube video (for example, https://www.youtube.com/watch?v=qKfcLcHeivc).

Speak will automatically find the file, calculate the length, and import the video.

If using YouTube videos, please make sure you use the full link and not the shortened YouTube snippet. Additionally, make sure you remove the channel name from the URL.
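If you are preparing many YouTube links at once, a small helper can normalize them first. This is a rough sketch under two assumptions that the article does not spell out: that the "shortened snippet" means a `youtu.be` link, and that the "channel name" means the `ab_channel` query parameter some browsers append. The function name `to_full_youtube_url` is hypothetical.

```python
from urllib.parse import parse_qs, urlparse


def to_full_youtube_url(url: str) -> str:
    """Return a full watch URL that contains only the video ID."""
    parsed = urlparse(url)
    host = parsed.netloc.lower()
    if host.startswith("www."):
        host = host[4:]

    if host == "youtu.be":
        # Assumed short form: the video ID is the path itself.
        video_id = parsed.path.lstrip("/")
    elif host in {"youtube.com", "m.youtube.com"}:
        # Full form: the video ID is the "v" query parameter.
        video_id = parse_qs(parsed.query).get("v", [""])[0]
    else:
        raise ValueError(f"Not a YouTube URL: {url}")

    if not video_id:
        raise ValueError(f"No video ID found in: {url}")

    # Rebuilding the URL drops every other parameter, including ab_channel.
    return f"https://www.youtube.com/watch?v={video_id}"


print(to_full_youtube_url("https://youtu.be/qKfcLcHeivc"))
print(to_full_youtube_url(
    "https://www.youtube.com/watch?v=qKfcLcHeivc&ab_channel=ExampleChannel"
))
```

Both calls print the same full link, `https://www.youtube.com/watch?v=qKfcLcHeivc`.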

As mentioned, Speak also contains a range of integrations for Zoom, Zapier, Vimeo and more that will help you automatically transcribe your media.

This library of integrations continues to grow! Have a request? Feel encouraged to send us a message.

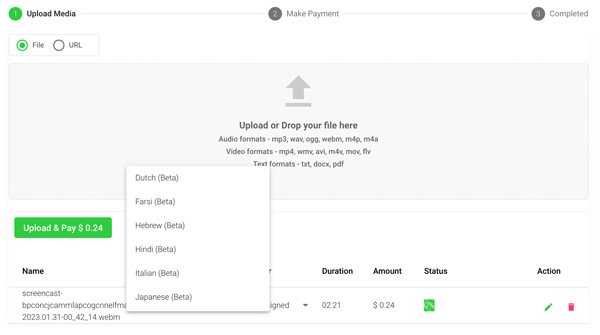

Once you have your file(s) ready and load them into Speak, it will automatically calculate the total cost (you get 30 minutes of audio and video free in the 7-day trial, so take advantage of it!).

If you are uploading text data into Speak, there is currently no upload cost. Only the Speak Magic Prompts analysis incurs a fee, which is detailed below.

Once you go over your 30 minutes or need to use Speak Magic Prompts, you can pay by subscribing to a personalized plan using our real-time calculator.

You can also add a balance or pay for uploads and analysis without a plan using your credit card.

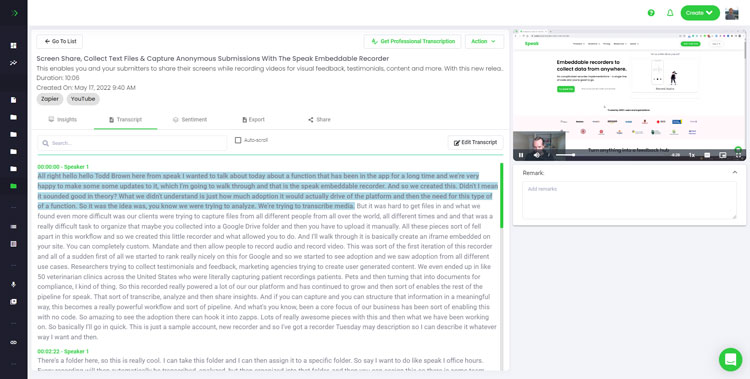

If you are uploading audio and video, our automated transcription software will prepare your transcript quickly. Once completed, you will get an email notification that your transcript is complete. That email will contain a link back to the file so you can access the interactive media player with the transcript, analysis, and export formats ready for you.

If you are importing CSVs or uploading text files, Speak will generally analyze the information much more quickly.

Speak is capable of analyzing both individual files and entire folders of data.

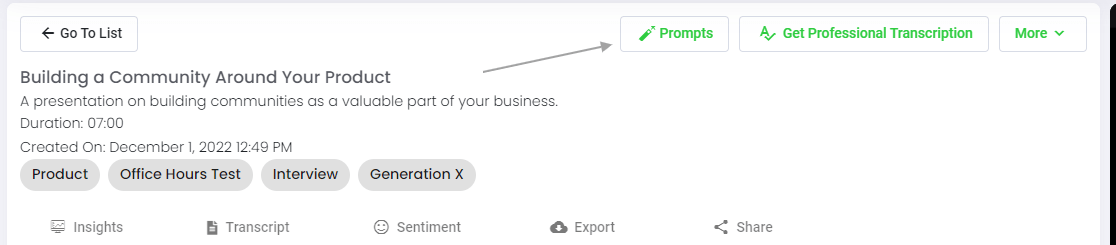

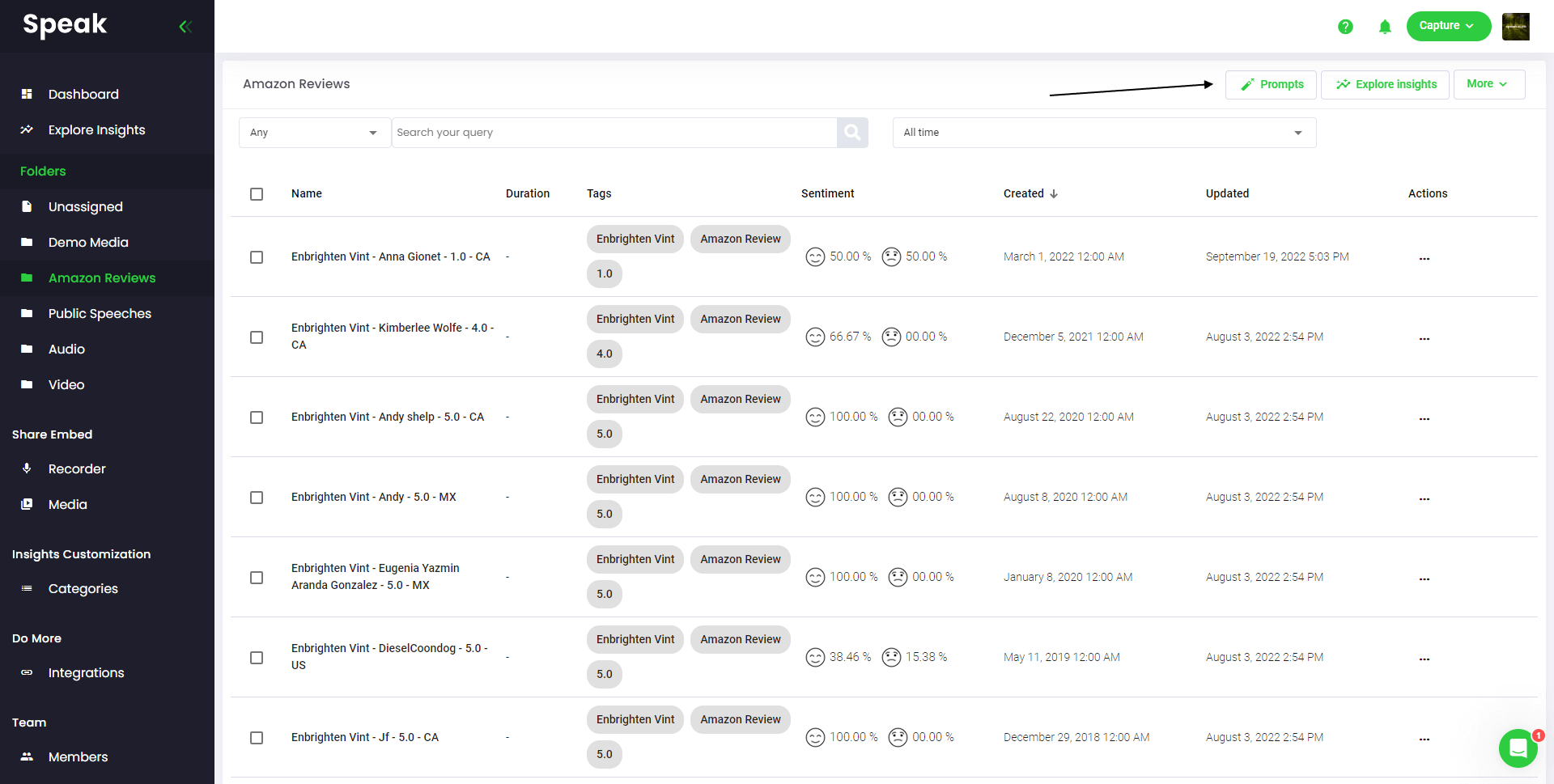

When you are viewing any individual file in Speak, all you have to do is click on the "Prompts" button.

If you want to analyze many files, all you have to do is add the files you want to analyze into a folder within Speak.

You can do that by adding new files into Speak or you can organize your current files into your desired folder with the software's easy editing functionality.

Speak Magic Prompts leverage innovation in artificial intelligence models often referred to as "generative AI".

These models have analyzed huge amounts of data from across the internet to gain an understanding of language.

With that understanding, these "large language models" are capable of performing mind-bending tasks!

With Speak Magic Prompts, you can now perform those tasks on the audio, video and text data in your Speak account.

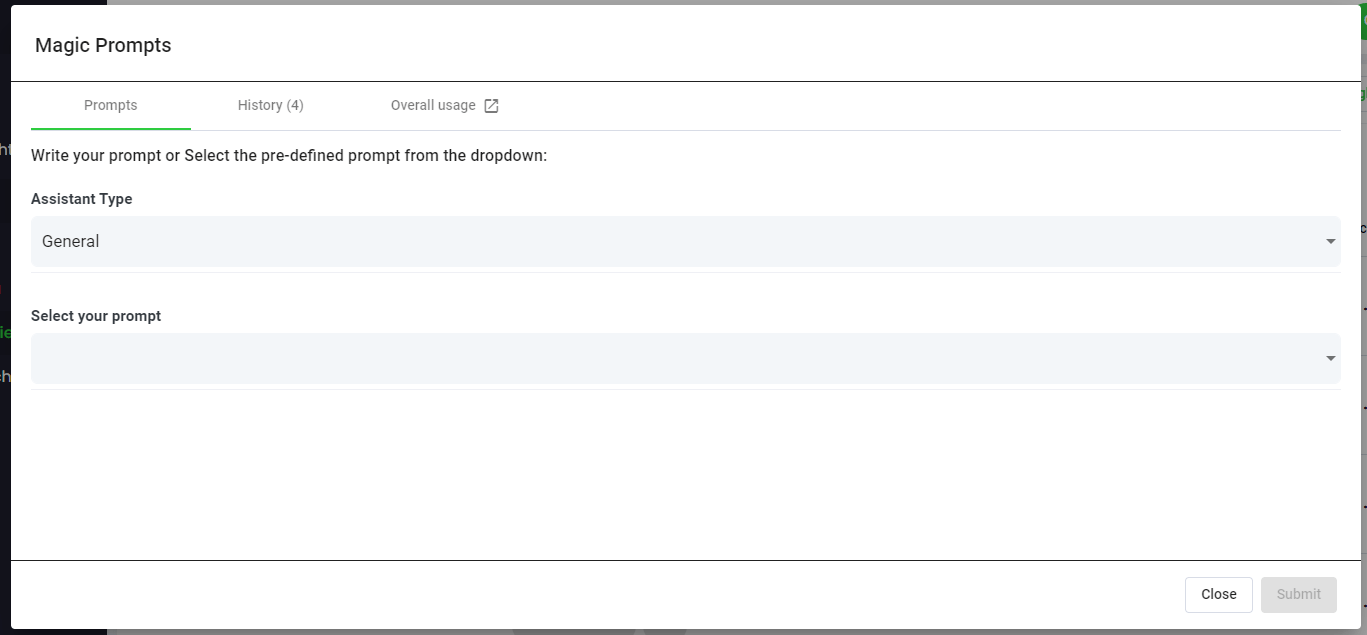

To help you get better results from Speak Magic Prompts, Speak has introduced "Assistant Type".

These assistant types pre-set and provide context to the prompt engine for more concise, meaningful outputs based on your needs.

To begin, we have included:

Choose the most relevant assistant type from the dropdown.

Here are some example prompts that you can apply to any file right now:

A modal will pop up so you can use the suggested prompts we shared above to instantly and magically get your answers.

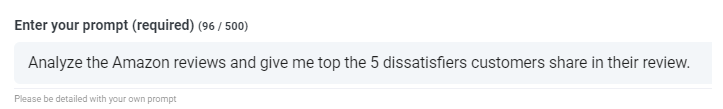

If you have your own prompts you want to create, select "Custom Prompt" from the dropdown and another text box will open where you can ask anything you want of your data!

Speak will generate a concise response for you in a text box below the prompt selection dropdown.

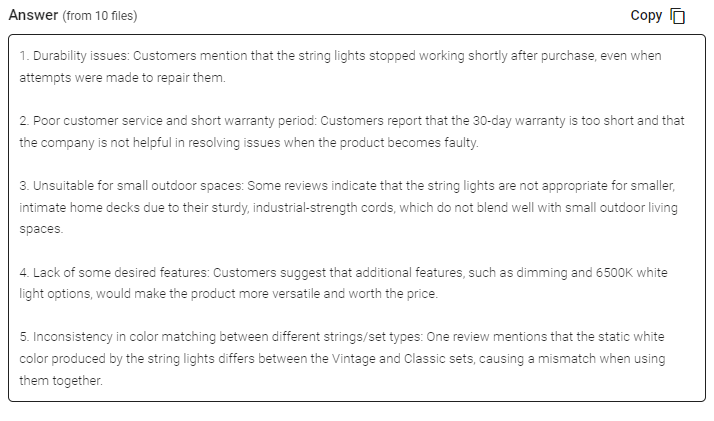

In this example, we ask Speak to analyze all the interview data in the folder at once for the top product dissatisfiers.

You can easily copy that response for your presentations, content, emails, team members and more!

Our team at Speak Ai continues to optimize the pricing for Magic Prompts and Speak as a whole.

Right now, anyone in the 7-day trial of Speak gets 100,000 characters included in their account.
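To get a rough sense of how far that allowance goes, you could count the characters in your text before analyzing it. The sketch below only does arithmetic on the 100,000-character figure stated above. It assumes that each character of analyzed text counts once against the allowance, which the article does not confirm, and the function name `characters_remaining` is hypothetical.

```python
TRIAL_CHARACTER_ALLOWANCE = 100_000  # trial figure stated in the article


def characters_remaining(texts, allowance=TRIAL_CHARACTER_ALLOWANCE):
    """Return how many characters would be left after analyzing the texts.

    Assumption: each character of input text counts once against the
    allowance. A negative result means the texts exceed the allowance.
    """
    used = sum(len(text) for text in texts)
    return allowance - used


# Two placeholder transcripts of 40,000 and 25,000 characters.
transcripts = ["a" * 40_000, "b" * 25_000]
print(characters_remaining(transcripts))  # 35000
```

Treat the result as an estimate only; the actual usage shown in your Speak account is the number that matters.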

If you need more characters, you can easily include Speak Magic Prompts in your plan when you create a subscription.

You can also upgrade the number of characters in your account if you already have a subscription.

Both options are available on the subscription page.

Alternatively, you can use Speak Magic Prompts by adding a balance to your account. The balance will be used as you analyze characters.

Here at Speak, we've made it incredibly easy to personalize your subscription.

Once you sign up, just visit our custom plan builder and select the media volume, team size, and features you want to get a plan that fits your needs.

No more rigid plans. Upgrade, downgrade or cancel at any time.

When you subscribe, you will also get a free premium add-on for three months!

That means you save up to $50 USD per month and $150 USD in total.

Once you subscribe to a plan, all you have to do is send us a live chat with your selected premium add-on from the list below:

We will put the add-on live in your account free of charge!

What are you waiting for?

If you have friends, peers and followers interested in using our platform, you can earn real monthly money.

You will get paid a percentage of all sales, whether the customers you refer pay for a plan, automatically transcribe media, or use professional transcription services.

Use this link to become an official Speak affiliate.

It would be an honour to personally jump on an introductory call with you to make sure you are set up for success.

Just use our Calendly link to find a time that works well for you. We look forward to meeting you!

Get a 7-day fully-featured trial.

Powered by Speak Ai Inc. Made in Canada.

Use Speak's powerful AI to transcribe, analyze, automate and produce incredible insights for you and your team.